Many experiments only recruited between 30 and 50 participants, which limits the statistical power of the experiments and the generalizability of the results. In recent years, participants were often recruited from online portals like Amazon Mechanical Turk adds further to the artificiality of the experiments. The general notion of WEIRD (western, educated, industrialised, rich and democratic) participants and the issues of validity in psychology experiments are present in most of the previous work on anchoring bias too. Such a setting does not encourage participants to concentrate fully on the task at hand, given pay is frequently fixed-rate and non-performance-related. Moreover, participants were often incentivised to take part through monetary reimbursement or course credit.

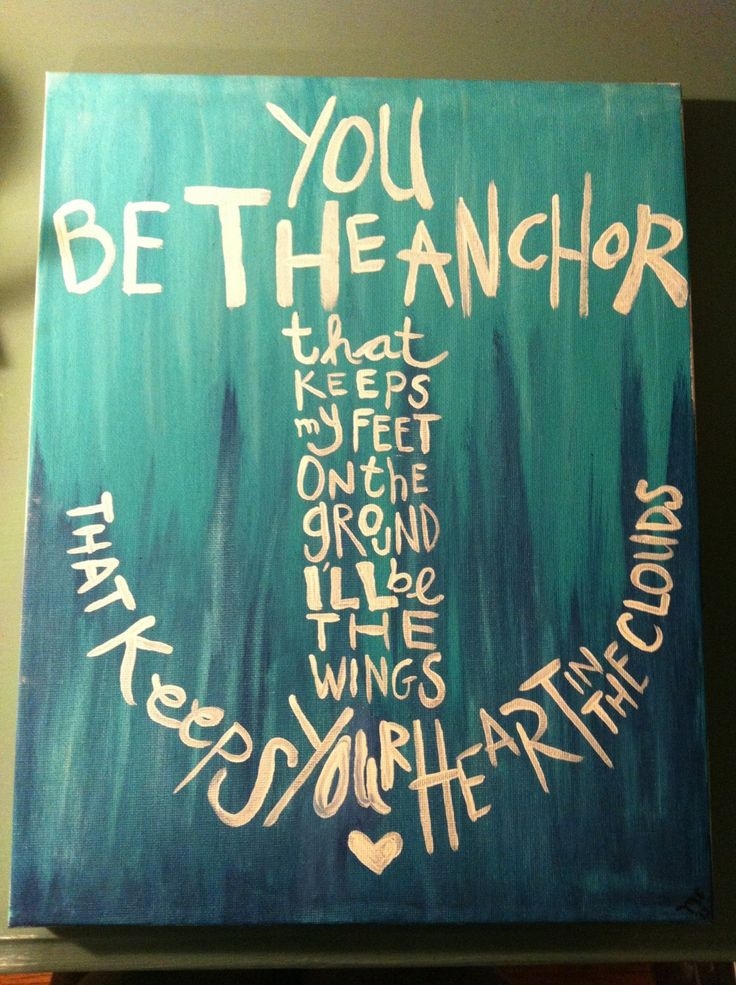

With the assumption that research on social psychology should inform and shape policy and regulations towards information systems (such as social media), it is important to bring the experiments as close as possible to daily life scenarios where those policies would apply. Few of these questions, however, seem particularly engaging or representative of everyday situations. For example, subjects have been asked to estimate the percentage of African countries in the United Nations, the length of the Mississippi river, Gandhi’s age at death, and the number of calories in a strawberry. Often experiments have been limited to single questions examining participants general knowledge. Most experiments investigating anchoring effects have been limited in their design and implementation. Typically, anchoring bias occurs when numeric anchors are provided, although some research has also investigated the effect of non-numeric anchors. This effect is pervasive and robust in a variety of experimental settings (see and real-world contexts, including in courtroom sentencing, in negotiations, in financial market decisions, in property pricing, and in judging the probability of the outbreak of a nuclear war. The term ‘anchoring’ can therefore be understood as people’s tendency to rely heavily on these prior values (or ‘anchors’) when making decisions. the value of a car), the resulting judgement tends to be similar to a previously encountered value (e.g. Tversky and Kahneman observed that in situations where people make estimates or predictions (e.g. This paper examines one of these effects: anchoring. show that depending on what online platform is used, people may be exposed to extremely different information, which can, in turn, result in the development of “social bubbles” or even changes in their emotional state. 
Given the black-box nature of the algorithms that drive searching and selecting relevant information on the Internet, it is left to large Internet corporations managing those algorithms to decide which information is “valuable” and therefore displayed to users.

Examples of cognitive bias include the anchoring effect (that is, the influence on decisions of the first piece of information encountered), the availability heuristic (where estimates of the probability of an outcome depend on ease of access to that information), the framing effect (the presentation of identical information in different ways), and confirmation bias (a focus on information that supports a pre-existing position), among others. Commonly referred to as cognitive biases, these errors are the result of non-rational information processing.

However, as has been shown by Tversky and Kahneman these shortcuts come at a cost: to be able to quickly solve a problem, certain information will be simplified, some ignored, and estimations will be made, thus increasing the likelihood of systematic errors in decisions. Heuristics are mental shortcuts that enable us to arrive at solutions to complex tasks or problems with minimal effort.
